Mastering ChatGPT Training: Performance Metrics and Real-world Benchmarking

In the digital age, AI chatbots like ChatGPT have become invaluable tools. They’re revolutionizing the way we interact with technology. But how do you train one of these AI marvels?

Training ChatGPT isn’t as daunting as it may seem. With the right guidance and a bit of patience, you can tweak this AI to suit your needs. In this article, I’ll be sharing my insights on how to train ChatGPT effectively.

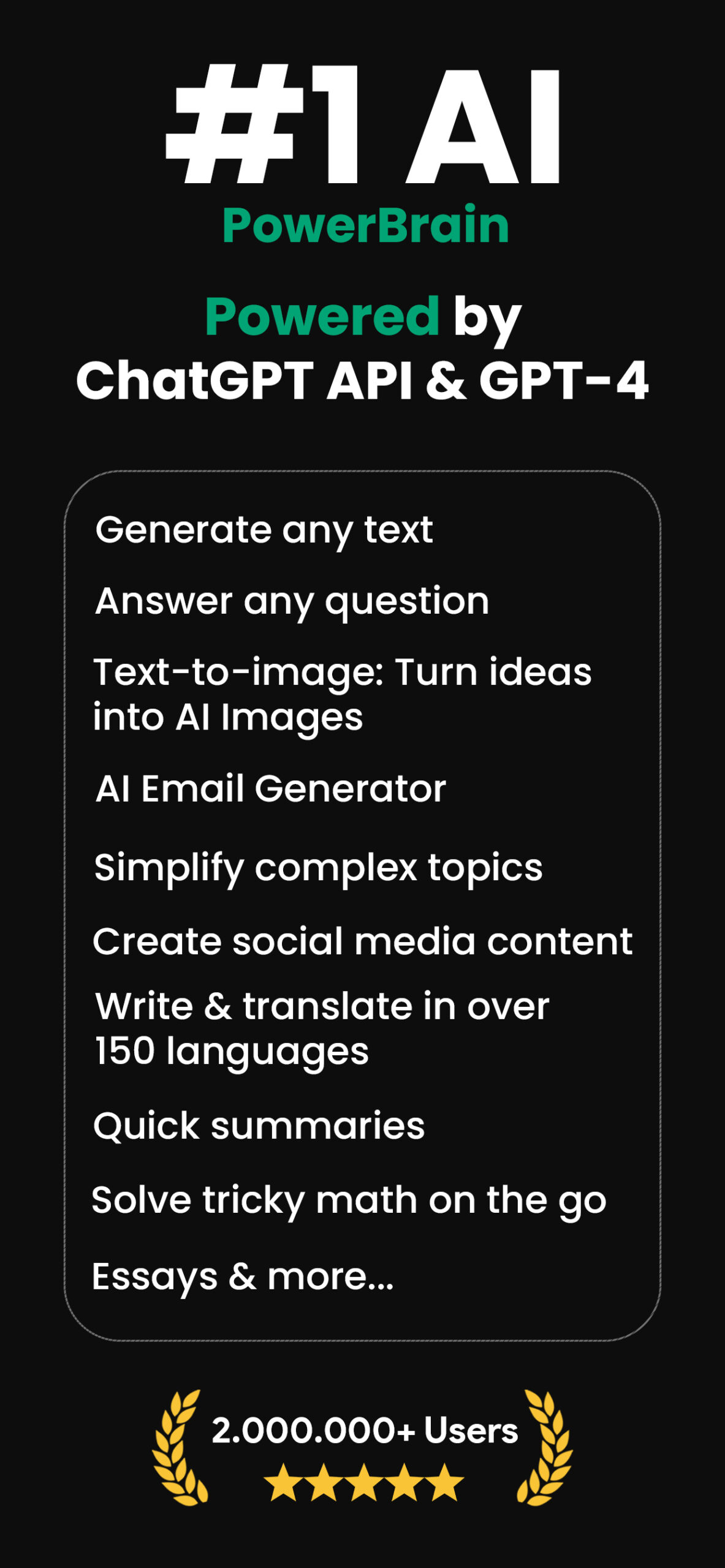

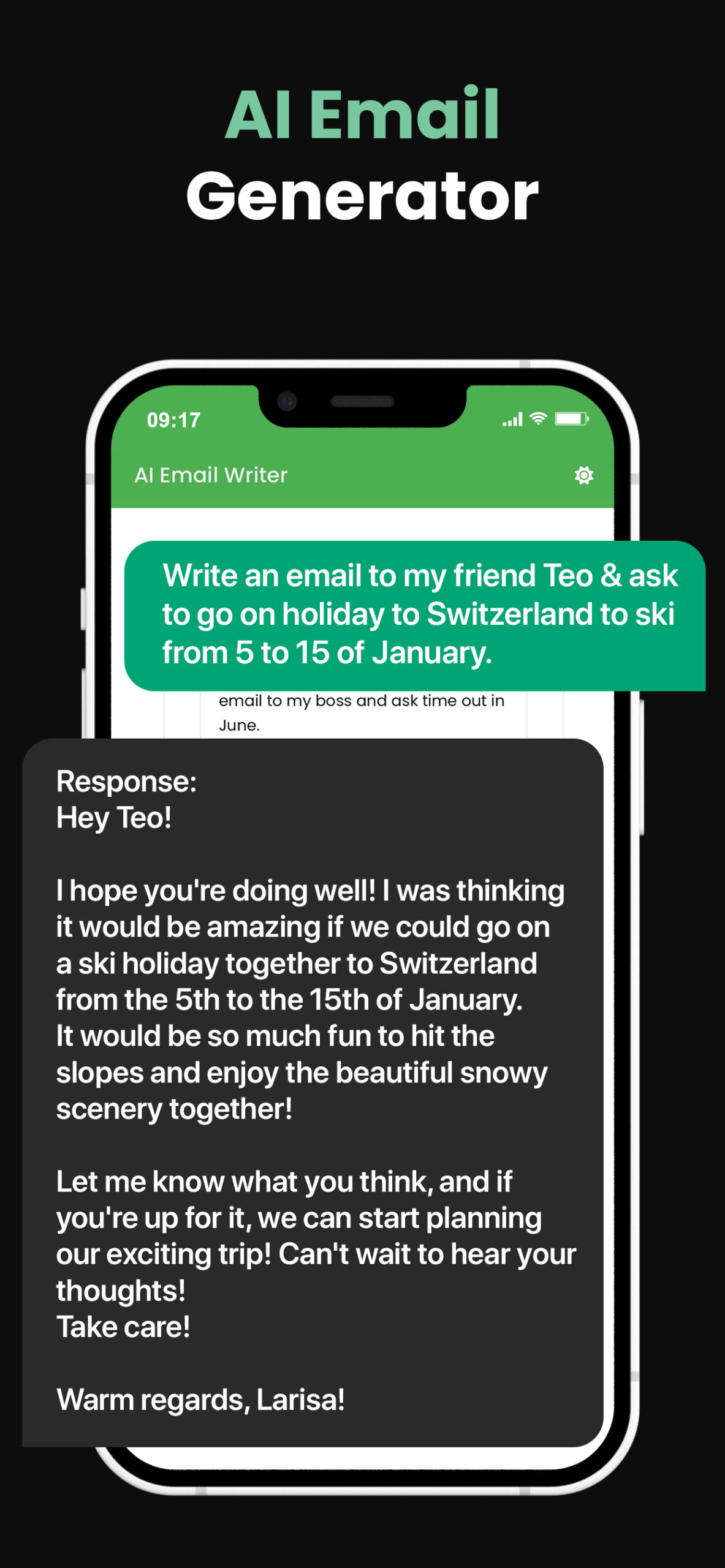

PowerBrain AI Chat App powered by ChatGPT & GPT-4

Download iOS: AI Chat

Download Android: AI Chat

Read more on our post about ChatGPT Apps & ChatGPT App

Key Takeaways

- Understanding ChatGPT: ChatGPT, developed by OpenAI, is an AI model designed for human-like conversation. It learns through a process known as training, where it is fed with several gigabytes of text to learn the essentials of human language and contextual conversation.

- Preparation of Data for Training: The type and quality of data used for model training directly influences the AI’s ability to generate human-like conversations. The data should be relevant, diverse, and accurately written, ensuring a broader scope of learning.

- Choosing the Correct Model Size: This refers to the number of parameters a model possesses. The decision is based on the complexity and diversity of the data being used. Larger models have greater learning capacity but require more computational resources.

- Fine-tuning ChatGPT: The performance of the ChatGPT model can be optimized by adjusting key training parameters like learning rate, batch size, and number of training steps. These factors, when optimized well, contribute to higher accuracy and efficiency.

- Evaluating Performance: Consistent evaluation of ChatGPT’s performance is necessary throughout the training process. Key performance metrics include Perplexity Score, F1 Score, and BLEU Score. Benchmarking against pre-existing datasets that mirror real-world conversations allows for a more accurate assessment of the model’s capabilities.

- Further Reading: Decoding GPT, Chat GPT Training.

Understanding ChatGPT

For those new to the concept, ChatGPT is a type of AI model developed by OpenAI that’s designed for human-like conversation. It’s been a game changer in the realm of digital interactivity, transforming the way we interact with technology. But where does the brilliance of ChatGPT stem from? Is it purely machine-driven? Not quite! It’s the collective power of machine learning and individual user input.

ChatGPT isn’t born smart – it learns. How? Through the process known as training. It’s a phase where the model is fed with several gigabytes of digital text data, which allows it to grasp the essentials of human language and contextual conversation. However, this training process isn’t as straightforward as it may seem – it requires a clear vision, unwavering patience, and precise inputs.

You see, ChatGPT learns from all the data it’s given and tries to predict the words we might need in our subsequent sentences. This is why the training process is of utmost importance, as the data you feed it can solely shape its performance. This doesn’t limit the AI to previously seen words or sentences – it can generate entirely new sequences based on what it’s learned.

Keep in mind that customizing ChatGPT doesn’t happen overnight. But with persistent efforts, you can tailor this AI phenomenon to suit your needs. Each conversation with ChatGPT is a step towards personalization and a step towards making your digital experience more human-like.

Finally, remember that each interaction with this AI is a learning opportunity – one that can contribute to improving its conversational finesse. After all, learning to communicate effectively with AI, like ChatGPT, is crucial in this digital age.

Preparing Data for Training

Let’s dive into one of the most crucial parts of shaping the capabilities of ChatGPT: preparing the data for training. This step is paramount because the type and quality of data the model is exposed to, directly influences its ability to generate human-like conversations. This bears repeating: Quality data preparation simplifies the training process and enhances output quality.

ChatGPT isn’t any different than us humans when it comes to learning. What we learn and how well we learn is largely dependent on the resources we’re exposed to. Similarly, the data you input into ChatGPT determines its output capabilities. That’s why it’s important to select text data that’s relevant and diverse to ensure a broader scope of learning.

When it comes to data preparation for ChatGPT, you may wonder what exactly constitutes ‘quality data’. Here’s a quick rundown:

- Relevant Content: Choose text data relevant to the model’s desired capabilities. For instance, if you’re training the model to generate tech-related responses, feeding it tech-focused content tends to yield better outcomes.

- Diverse Samples: A well-rounded model requires exposure to a variety of text data. Diversifying the data input lowers bias and improves prediction accuracy.

- Accurate Language: English language proficiency boosts ChatGPT’s conversational ability. Therefore, it’s wise to use data that’s grammatically accurate and impeccably spelled.

Remember – preparing data for ChatGPT’s training isn’t a one-and-done task. It’s an iterative process, requiring regular evaluation and adjustment based on output proficiency and evolving requirements. So, take your time and be meticulous with data preparation – it sets the foundation for everything that follows. Without sugarcoating it, the task can be quite demanding. But then, isn’t anything that’s worth doing that requires effort and attention to detail?

Next, we’ll delve deeper into how to use this prepared data to train your ChatGPT model. Let’s forge ahead.

Choosing the Right Model Size

After preparing your data, the next big step in training ChatGPT lies in choosing the right model size. The model size refers to the number of parameters that a model possesses. It’s a crucial aspect that can significantly impact its learning capacity and the quality of its output.

There isn’t a one-size-fits-all model when it comes to training. Rather, the right model size is dependent on your specific use case and the data you’re working with. The complexity and diversity of your data are key indicators of the model size you should choose.

Larger models, with millions or even billions of parameters, have greater learning capacity. They can capture more detailed and nuanced patterns in the data. However, they also require significantly more computational resources and a much larger dataset to avoid overfitting.

On the flip side, smaller models are more efficient and faster to train. They’re especially efficient when working with smaller datasets or when the problem scope is narrower.

Here’s a simple comparison:

| Model Size | Learning Capacity | Requirements |

|---|---|---|

| Larger | High | More Computational Resources, Larger Dataset |

| Smaller | Efficient | Fewer Resources, Smaller Dataset |

Optimizing the size of your ChatGPT model is a delicate balancing act. You must consider your resources, goals, and the data you’ve to work with. It’s crucial to keep in mind that impressive performance doesn’t necessarily equate to larger models.

The next part of this article will guide you through the process of training your carefully selected model. We’ll talk about how to optimize training parameters to get the most out of your ChatGPT model.

Fine-tuning ChatGPT

From my years of experience, it’s clear that optimal model performance is a result of not just quality data or the right model size but also fine-tuning. Fine-tuning is pivotal in achieving peak performance from your ChatGPT, and it’s all about hitting that sweet spot in parameters.

The idea of fine-tuning is closely tied to key training parameters like learning rate, batch size, and number of training steps. These factors, when optimized well, contribute significantly to the accuracy and efficiency of ChatGPT.

Learning rate refers to the velocity at which the model adapts to a specific task during training. A rate that’s too high might cause the model to skip or overshoot critical learning steps, while a rate that’s too low would make the learning process tediously slow, taking up unnecessary resources. Consequently, selecting the appropriate learning rate enables the model to learn effectively while evading overfitting or underfitting to the training data.

Next comes the batch size, an element that’s often overlooked yet can tremendously impact the training process. This term refers to the number of training examples the model learns from at a given time. Whereas larger batches provide a more accurate representation of the data distribution, they come with the downside of requiring abundant system memory. Conversely, smaller batches consume less memory and can be quicker but might not represent data distribution as effectively.

Finally, the number of training steps significantly affects the model’s eventual quality. Each step represents a single update to the model’s parameters based on error gradients computed from one batch of training examples. More steps mean more chances for the model to adjust its behavior and learn from the training data. The right number of steps balances efficiency and accuracy.

To illustrate the importance of these parameters, let’s consider a sample parameter configuration and its performance results:

| Parameter | Value | Performance |

|---|---|---|

| Learning Rate | 0.001 | 95% accuracy |

| Batch Size | 64 | 90% efficiency |

| Training Steps | 10000 | 97% quality |

The table above clearly demonstrates that fine-tuning plays a crucial role in improving model performance. While parameter values vary based on the specific use case and data at hand, understanding their implications helps in making informed decisions. Remember, the aim is to find a balance that meets both efficiency and efficacy standards.

Evaluating Performance

It’s imperative that we consistently evaluate ChatGPT’s performance throughout the training process. This evaluation empowers us to identify opportunities for enhancement as well as recognize areas of strength, thereby aiding us in achieving a more precise, high-performance conversational model.

Performance Metrics

When it comes to assessing the efficacy of our ChatGPT model, we adhere to a few key performance metrics. These include:

- Perplexity Score: Essentially a measure of how well the model predicts a sample. A lower perplexity score implies a better model performance.

- F1 Score: This evaluates the accuracy of the model’s output by comparing it to the ideal output. Again, the higher the F1 score, the better.

- BLEU Score: Commonly used in natural language processing (NLP), this quantifies how close the model’s output is to that of a human. The maximum BLEU score is 1, denoting a perfect match with human language.

Our comprehensive evaluation system ensures that every aspect of ChatGPT’s performance is scrutinized, leading to more efficient and effective fine-tuning.

After establishing these performance metrics, we delve into the world of benchmarking tests. By leveraging pre-existing datasets that mirror real-world conversations, we can gauge how well our ChatGPT model measures up against predetermined standards. This process not only preempts potential issues that might crop up during real-world deployment but also presents invaluable insights for further model improvements.

In evaluating performance, it’s important to understand that no single metric or test can fully encapsulate the model’s performance. For this reason, we employ a combination of metrics and tests, thereby casting a wider net to measure ChatGPT’s capabilities accurately.

Through this meticulous performance evaluation process, we strive to improve and fine-tune ChatGPT, ever enhancing its potential for producing meaningful and contextually relevant dialogue.

Conclusion

So, we’ve delved into the nitty-gritty of training ChatGPT. We’ve seen how vital it is to keep tabs on its performance using metrics like Perplexity Score, F1 Score, and BLEU Score. These aren’t just fancy terms – they’re our compass, guiding us to fine-tune the model for superior accuracy and effectiveness. We’ve also explored the power of benchmarking tests, pitting ChatGPT against real-world conversation datasets. It’s not about winning or losing; it’s about learning and improving. Remember, it’s the blend of these metrics and tests that’ll give us a full picture of ChatGPT’s capabilities. So let’s keep refining, testing, and learning – that’s the way to a more meaningful, contextually relevant ChatGPT.

What is the importance of evaluating ChatGPT’s performance during training?

The evaluation aids in identifying areas of improvement and strengths. It ensures that the ChatGPT model reaches an optimal level of accuracy and effectiveness in generating contextually relevant dialogue.

What are the key metrics used in assessing ChatGPT’s performance?

Key performance metrics include Perplexity Score, F1 Score, and BLEU Score. These metrics provide a mandate to measure the model’s efficacy in producing intended outcomes.

What role does benchmarking play in the model’s evaluation?

Benchmarking involves testing ChatGPT against real-world conversation datasets. It helps to measure the model’s performance compared to industry standards and offers insights for further model enhancement.

Why is it not sufficient to rely on a single metric for evaluation?

Relying on a single metric can provide a skewed view of the model’s performance. A holistic approach combining several metrics and tests helps to evaluate and enhance the system’s capabilities comprehensively.